---
layout: NotesPage
title: Reasoning About Code Safety
permalink: /work_files/dev/cs/racs
prevLink: /work_files/dev/cs.html
---


Magic Numbers and Exploitation

-

- Exploit:

- An Exploit is a piece of software, a chunk of data, or a sequence of commands that takes advantage of a bug or vulnerability to cause unintended or unanticipated behavior to occur on computer software, hardware, or something electronic.

-

- Magic Numbers:

- Exploits are very brittle in nature.

Changing one number in an exploit (program) can render it useless.

Ex. Making sure the exploit runs on the right version.

-

- EXTRABACON:

- Is an NSA exploit for Cisco ASA “Adaptive Security Appliances”.

-

- It had an exploitable stack-overflow vulnerability in the SNMP read operation.

- But actual exploitation required two steps:

- Query for the particular version (with an SNMP read).

- Select the proper set of magic numbers for that version.

-

- ETERNALBLUE(screen):

- Another NSA exploit, stolen by the same group (the “Shadow Brokers”) that stole EXTRABACON.

-

- Eventually it was very robust.

- This was “god mode”:

a remote exploit of Windows through SMBv1 (Windows file sharing).


-

- But initially it was jokingly called ETERNALBLUESCREEN:

- Because it crashed Windows computers more reliably than it exploited them.


Reasoning About Memory Safety

-

- Memory Safety:

- No accesses to undefined memory.

“Undefined” is with respect to the semantics of the programming language.

- Undefined behavior is:

* At minimum: a bug.

* At maximum: exploitable.

-

- Read Access:

- An attacker can read memory that he isn’t supposed to.

-

- Write Access:

- An attacker can write memory that she isn’t supposed to.

-

- Execute Access:

- An attacker can transfer control flow to memory that they aren’t supposed to.
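As a minimal sketch of what ruling out an undefined read looks like in C, the helper below refuses any index outside the buffer instead of touching memory the language leaves undefined. The name `checked_read` and its error-code convention are assumptions made for illustration, not part of the original notes.

```c
#include <stddef.h>

/* Hypothetical helper: reads buf[i] only when the access is defined.
 * Returns 0 on success and -1 when the access would be out of bounds. */
int checked_read(const int *buf, size_t len, size_t i, int *out) {
    if (buf == NULL || out == NULL || i >= len) {
        return -1;          /* refuse instead of reading undefined memory */
    }
    *out = buf[i];          /* here 0 <= i < len, so the read is in bounds */
    return 0;
}
```

The caller has to pass the true length of the buffer; C gives the helper no way to verify that on its own.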

Reasoning About Safety

-

- How can we have confidence that our code executes in a safe fashion?:

- Approach: build up confidence on a function-by-function / module-by-module basis.

-

- Modularity:

- Modularity provides boundaries for our reasoning.

- Preconditions: what must hold for function to operate correctly.

- Postconditions: what holds after function completes.

These basically describe a contract for using the module.

- The notion of modularity also applies to individual statements.

Statement-1’s postcondition should logically imply Statement-2’s precondition.

- Invariants: conditions that always hold at a given point in a function.

This particularly matters for loops.

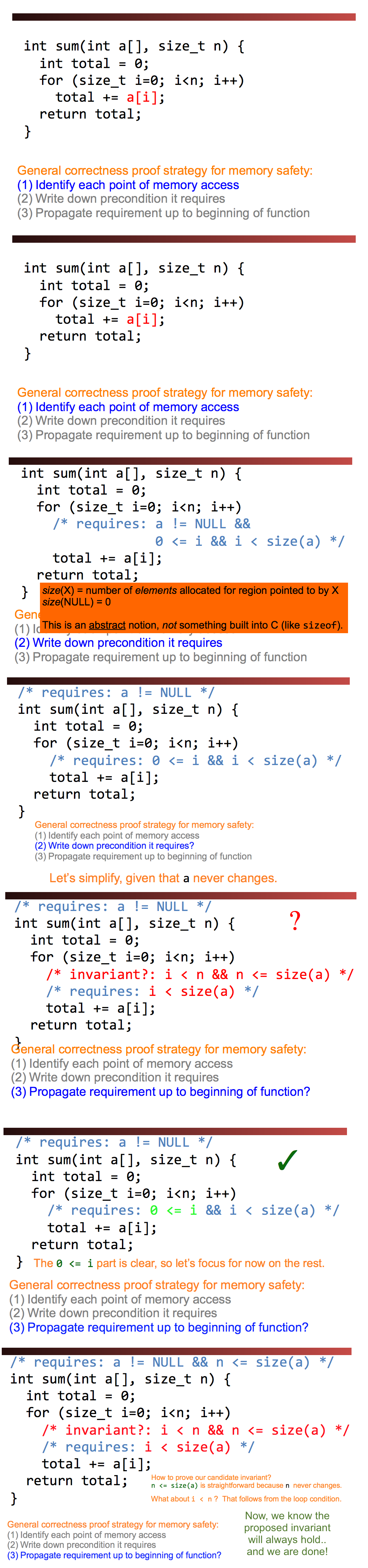

- How to Prove Memory-Safety?

- Identify each point of memory access.

- Write down precondition it requires.

- Propagate requirement up to beginning of function.

- Complicated loops might need us to use induction to show invariants:

- Base case: first entrance into loop.

- Induction: show that postcondition of last statement of loop, plus loop test condition, implies invariant.

-

- Example 1:

-

    int deref(int *p) {
        return *p;
    }

- The Pre-Condition: requires `p` to not be NULL, and `p` to be a valid pointer.

    void *mymalloc(size_t n) {
        void *p = malloc(n);
        if (!p) {
            perror("malloc");
            exit(1);
        }
        return p;
    }

- The Post-Condition: ensures that the return value is not NULL, and is a valid pointer.
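To see how the two contracts compose, here is a small sketch in which each statement's postcondition implies the next statement's precondition. The caller `store_and_load` is a hypothetical function added for illustration, and the `assert` can only check the non-NULL half of `deref`'s precondition; validity of the pointer still has to be argued on paper.

```c
#include <assert.h>
#include <stdio.h>
#include <stdlib.h>

/* Postcondition: the returned pointer is non-NULL and valid. */
void *mymalloc(size_t n) {
    void *p = malloc(n);
    if (!p) { perror("malloc"); exit(1); }
    return p;
}

/* Precondition: p is non-NULL and points to a valid, initialized int. */
int deref(int *p) {
    assert(p != NULL);              /* checks only the NULL half */
    return *p;
}

/* Hypothetical caller chaining the contracts together. */
int store_and_load(int value) {
    int *p = mymalloc(sizeof *p);   /* post: p != NULL, p valid for one int */
    *p = value;                     /* pre: p valid; post: *p initialized */
    int result = deref(p);          /* pre: satisfied by the two lines above */
    free(p);
    return result;
}
```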

-

- Example 2:

-

    int sum(int a[], size_t n) {
        int total = 0;
        for (size_t i = 0; i < n; i++)
            total += a[i];
        return total;
    }

- The Pre-Condition: requires `a` to not be NULL, and `a` to hold at least `n` elements (`n <= size(a)`).
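The reasoning for the loop can be written directly into the code. The sketch below annotates the memory access `a[i]` with the invariant that makes it safe; `size(a)` is notation for the number of elements the caller actually allocated, which C cannot check at run time, so that part stays a comment.

```c
#include <assert.h>
#include <stddef.h>

/* Precondition: a != NULL and n <= size(a). */
int sum(int a[], size_t n) {
    assert(a != NULL);
    int total = 0;
    for (size_t i = 0; i < n; i++) {
        /* Invariant: 0 <= i && i < n && n <= size(a).
         * Base case: i == 0 and the loop test gives i < n.
         * Induction: i++ followed by the loop test again gives i < n. */
        total += a[i];              /* in bounds because i < n <= size(a) */
    }
    return total;
}
```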

-

- Drawbacks:

- Unfortunately, the process described above is too tedious.

Thus, programmers tend not to safety-check their code.

-

- Alternative:

- Don’t use C or C++.

- Instead, Use a Safe language:

Turns “undefined” memory references into an immediate exception or program termination.

- Now you simply don’t have to worry about buffer overflows and similar vulnerabilities.

- Safe Languages: Python, Java, Go, Rust, Swift.

Making C Safe [Alternatives of the Alternatives]

- Stack Canaries:

- Goal: protect the return pointer from being overwritten by a stack buffer.

- Defends-Against: Stack Over-Flows.

- Method:

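- When the program starts up, create a random value (the canary).

- On function entry, place a copy of the canary on the stack between the local buffers and the saved return pointer.

- When returning from a function, first check the canary on the stack against the stored value, and abort if they differ.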

- Drawbacks: (i.e. How to NOT kill the Canary?)

- Find out what the canary is (e.g. via a format string vulnerability) and then write its value back.

- Write around the canary.

- Overflow the Heap.

- Properties:

- A simple stack overflow doesn’t work anymore.

- Minor and nearly negligible overhead.

- It requires a compiler flag to enable on Linux.
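The canary check can be simulated without relying on undefined behavior. In this sketch the struct `frame` stands in for a real stack frame, and the layout, the function name `copy_and_check`, and the made-up return address are all assumptions for illustration; a real compiler inserts the equivalent prologue and epilogue code itself.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Simulated stack frame: a buffer, then the canary, then the saved return address. */
struct frame {
    unsigned char buf[8];
    uint64_t canary;
    uint64_t saved_return;
};

/* Copies input into the frame starting at buf, mimicking an unchecked copy
 * that can run past buf. Returns 1 if the canary survived and 0 if it was
 * clobbered (a real program would abort at that point). */
int copy_and_check(const unsigned char *input, size_t len, uint64_t secret) {
    struct frame f;
    memset(&f, 0, sizeof f);
    f.canary = secret;                   /* prologue: place the canary */
    f.saved_return = 0x1111;             /* made-up return address */

    if (len > sizeof f) len = sizeof f;  /* keep the simulation itself in bounds */
    memcpy(&f, input, len);              /* "overflow": passes buf when len > 8 */

    return f.canary == secret;           /* epilogue: verify before returning */
}
```

An input of 8 bytes leaves the canary intact, while a longer input filled with arbitrary bytes is detected. An input that carries the correct canary value at the right offset passes the check even though it overwrites the saved return address, which is the "find out the canary and write it back" bypass listed under the drawbacks.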

- Non-Executable Pages:

- Goal: mark writable pages (stack, heap) as non-executable in the TLB/Page-Table.

- Defends-Against: Code injection.

- Method:

- The TLB/Page-Table has three permission bits: R (read), W (write), X (execute).

- Maintain W xor X as a global property.

Now, code written to the stack or heap can’t be executed.

- Drawbacks:

- Unfortunately, this is insufficient. There are multiple ways around it.

- Properties:

- A simple stack overflow doesn’t work anymore.

- Effectively no performance impact.

- Does break some code.

- Yet it is still not ubiquitous on embedded systems.

e.g. Cisco ASA

- Exploits that defeat this defense:

- Return into libc: set up the stack frame such that the “return” jumps into an existing libc function such as `exec`.

- Return Oriented Programming:

- Given a code library, find a set of fragments (gadgets) that when called together execute the desired function.

The “ROP Chain”.

- Inject onto the stack a sequence of saved “return addresses” that will invoke this.


Unfortunately, many such exploits are available online.

- Address Space Layout Randomization:

- Goal: Randomize the addresses of the executable's sections in memory.

- Defends-Against: Buffer overflows.

- Method:

- Rather than deterministically allocating the process layout, the OS randomizes the starting base of each section of the executable (text, data/BSS, heap, stack).

- Randomly relocate everything (Every library, the start of the stack & heap).

- Drawbacks:

- An information leak that reveals a single address lets the attacker compute the other addresses in that region.

- With little address space to randomize, the attacker might get lucky by guessing.

- Properties:

- When combined with W^X, the attacker needs an information leak to exploit: a way to find the address of a function, and from it the magic offset needed to adjust the ROP chain.

- With 64 bits of address space there is lots of entropy, making programs harder to exploit.

- It requires a compiler flag to enable on Linux.
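One way to observe the randomization is to sample an address from each region and compare the values across several runs of the same binary. The sketch below does this; the struct `layout` and the function names are assumptions for illustration, and converting a function pointer to `uintptr_t` is implementation-defined, although it works on mainstream platforms.

```c
#include <inttypes.h>
#include <stdint.h>
#include <stdio.h>
#include <stdlib.h>

/* Addresses sampled from three regions of the running process. */
struct layout {
    uintptr_t code;   /* a function in the text section */
    uintptr_t stack;  /* a local variable */
    uintptr_t heap;   /* a malloc'd block */
};

struct layout sample_layout(void) {
    struct layout l;
    int local = 0;
    void *block = malloc(16);
    l.code = (uintptr_t)&sample_layout;
    l.stack = (uintptr_t)&local;
    l.heap = (uintptr_t)block;
    free(block);
    return l;
}

/* Prints one line per run; with ASLR active the values change between runs. */
void print_layout(void) {
    struct layout l = sample_layout();
    printf("code=%#" PRIxPTR " stack=%#" PRIxPTR " heap=%#" PRIxPTR "\n",
           l.code, l.stack, l.heap);
}
```

Calling `print_layout()` from `main` and running the program a few times shows which regions move. Whether the code address moves depends on how the binary was built, which is why a single leaked address of that kind is so valuable to an attacker.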

-

- Defense-in-Depth in Practice:

-

- Apple iOS uses ASLR in the kernel and userspace, W^X whenever possible.

-

All applications are sandboxed to limit their damage; the kernel is the TCB (Trusted Computing Base).

-

- The “Trident” Exploit: was used by a spyware vendor, the NSO group, to exploit iPhones of targets.

- To remotely exploit an iPhone, the NSO group’s exploit had to:

- Exploit Safari with a memory corruption vulnerability.

Gains remote code execution within the sandbox: writes to an R/W/X page that is part of the JavaScript JIT.

- Exploit a vulnerability to read a section of the kernel stack.

The saved return address, plus knowing which function made the call, breaks ASLR.

- Exploit a vulnerability in the kernel to enable code execution.

Software Security Issues and Testing

- Why does software have vulnerabilities?:

- Programmers are humans, and humans make mistakes.

Use Tools.

- Programmers often aren’t security-aware.

Learn about common types of security flaws.

- Programming languages aren’t designed well for security.

Use better languages (Java, Python, …).


- What makes testing a program for security problems difficult?:

- We need to test for the absence of something.

Security is a negative property!

“nothing bad happens, even in really unusual circumstances”

- Normal inputs rarely stress security-vulnerable code.


- How can we test more thoroughly?:

- Random inputs (fuzz testing)

- Mutation

- Spec-driven design.
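A mutation fuzzer can be sketched in a few lines: start from a valid input, corrupt it at random, and check a safety property after every run. Everything here is hypothetical and chosen for illustration: the length-prefixed record format, the target `parse_record`, and the small linear congruential generator used so that failures are reproducible.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical target: one length byte followed by that many payload bytes.
 * Returns the payload length, or -1 if the record is malformed. */
int parse_record(const uint8_t *buf, size_t len) {
    if (len < 1) return -1;
    size_t payload = buf[0];
    if (payload > len - 1) return -1;   /* the check the fuzzer exercises */
    return (int)payload;
}

/* Small deterministic PRNG (an LCG) so that failing runs can be replayed. */
static uint32_t next_rand(uint32_t *state) {
    *state = *state * 1664525u + 1013904223u;
    return *state >> 16;
}

/* Mutates one random byte of a valid seed per round and counts how often
 * the parser accepts a payload that would not fit inside the input. */
int fuzz_parse_record(uint32_t seed_value, int rounds) {
    const uint8_t seed[] = {3, 'a', 'b', 'c'};
    uint32_t state = seed_value;
    int violations = 0;
    for (int r = 0; r < rounds; r++) {
        uint8_t input[sizeof seed];
        memcpy(input, seed, sizeof seed);
        size_t pos = next_rand(&state) % sizeof input;
        input[pos] = (uint8_t)next_rand(&state);
        int got = parse_record(input, sizeof input);
        if (got != -1 && (size_t)got > sizeof input - 1) violations++;
    }
    return violations;
}
```

Deleting the bounds check in `parse_record` makes the violation count non-zero, which is the kind of signal a fuzzer is meant to surface.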

-

- How do we tell when we’ve found a problem?:

- When we have found a crash or other deviant behavior.

-

- How do we tell that we’ve tested enough?:

- This is a very hard task but code-coverage tools can help.

Working Towards Secure Systems

-

- Patching:

- Along with securing individual components, we need to keep them up to date.

-

- What’s hard about patching?:

- Can require restarting production systems.

- Can break crucial functionality.

- Management burden:

- It never stops (the “patch treadmill”).

- It can be difficult to track just what’s needed where.


-

- Vulnerability scanning:

- Probe your systems/networks for known flaws.

-

- Penetration testing (“pen-testing”):

- Pay someone to break into your systems.

Provided they take excellent notes about how they did it!

Approaches for Building Secure Software/Systems (Summary)

- Run-time checks:

- Automatic bounds-checking (overhead).

- What do you do if the check fails?

- Address randomization:

- Make it hard for attacker to determine layout.

- But they might get lucky / sneaky.

- Non-executable stack, heap:

- May break legacy code.

- See also Return-Oriented Programming (ROP).

- Monitor code for run-time misbehavior:

- E.g., illegal calling sequences.

- But again: what do you do if misbehavior is detected?

- Program in checks / “defensive programming”:

- E.g., check for null pointer even though sure pointer will be valid.

- Relies on programmer discipline.

- Use safe libraries:

- E.g. strlcpy, not strcpy; snprintf, not sprintf.

- Relies on discipline or tools.
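As a small sketch of the difference, `snprintf` takes the destination size and reports how many characters the full output would have needed, so truncation can be detected instead of overflowing the buffer as `sprintf` would. The helper name `format_greeting` and its return convention are assumptions for illustration.

```c
#include <stddef.h>
#include <stdio.h>

/* Hypothetical helper: formats a greeting without ever writing more than
 * dst_size bytes. Returns 0 on success and -1 if the output was truncated
 * or an encoding error occurred. */
int format_greeting(char *dst, size_t dst_size, const char *name) {
    int needed = snprintf(dst, dst_size, "hello, %s", name);
    if (needed < 0 || (size_t)needed >= dst_size) {
        return -1;   /* dst holds a NUL-terminated prefix when dst_size > 0 */
    }
    return 0;
}
```

The safe call only helps if the caller passes the real size of `dst` and checks the return value, which is the "discipline or tools" caveat above.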

- Bug-finding tools:

- Excellent resource as long as not many false positives.

- Code review:

- Can be very effective, but expensive.

- Use a safe language:

- E.g., Java, Python, C#, Go, Rust.

- Safe = memory safety, strong typing, hardened libraries.

- Installed base? Programmer base? Performance?

- Structure user input:

- Constrain how untrusted sources can interact with the system.

- Perhaps by implementing a reference monitor.

- Contain potential damage:

- E.g., run system components in jails or VMs.

- Think about privilege separation.