Order of Convergence

-

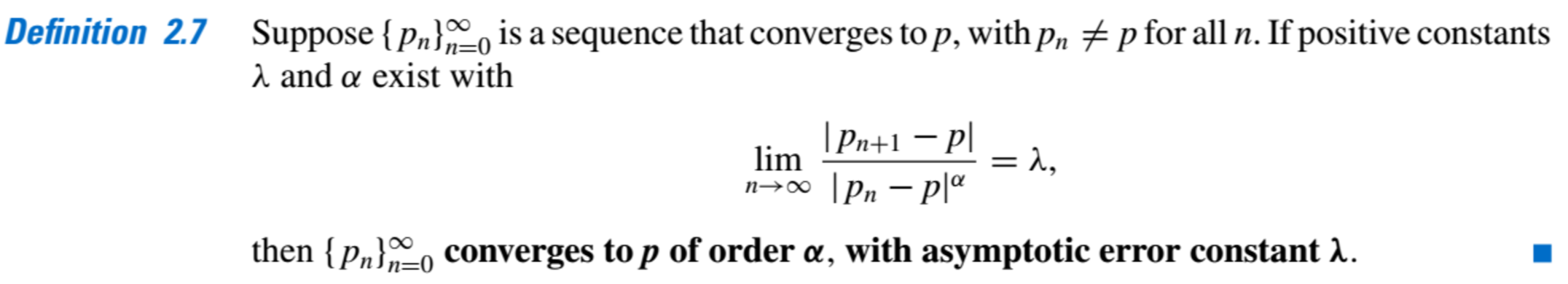

Order of Convergence:

-

Important, Two cases of order:

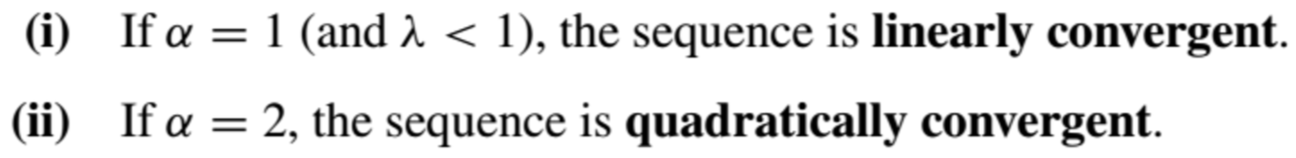

- An arbitrary technique that generates a convergent sequences does so only linearly:

Theorem 2.8 implies that higher-order convergence for fixed-point methods of the form \(g(p) = p\) can occur only when \(g'(p) = 0\).

-

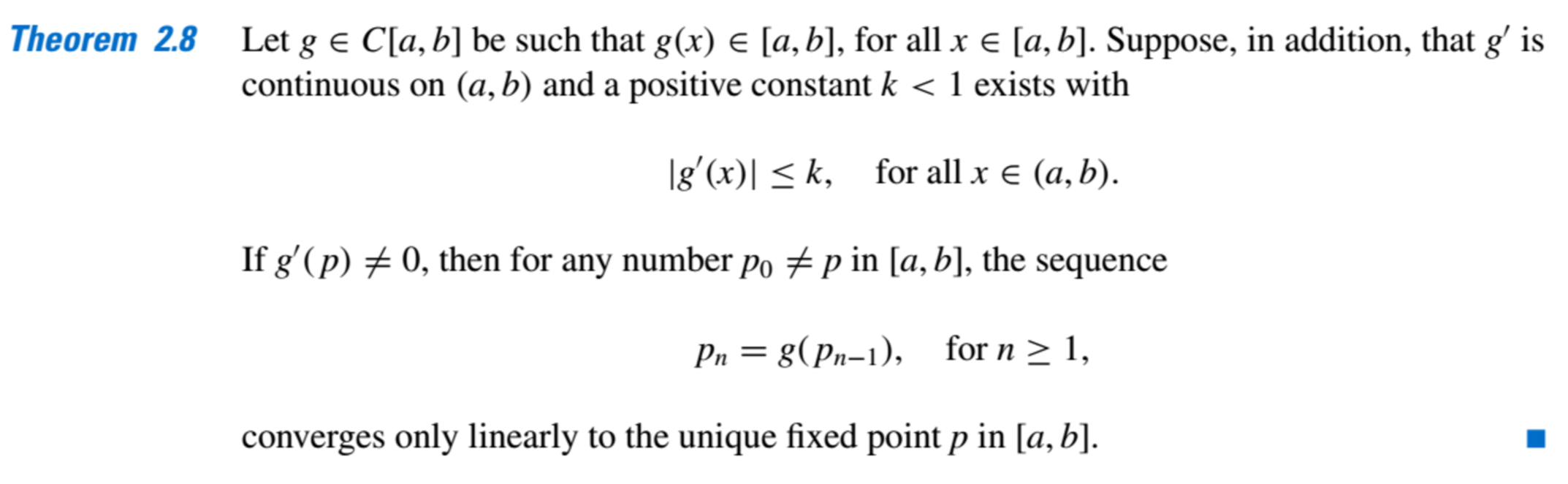

Conditions to ensure Quadratic Convergence:

-

Theorems 2.8 and 2.9 imply:

(i)

(ii)

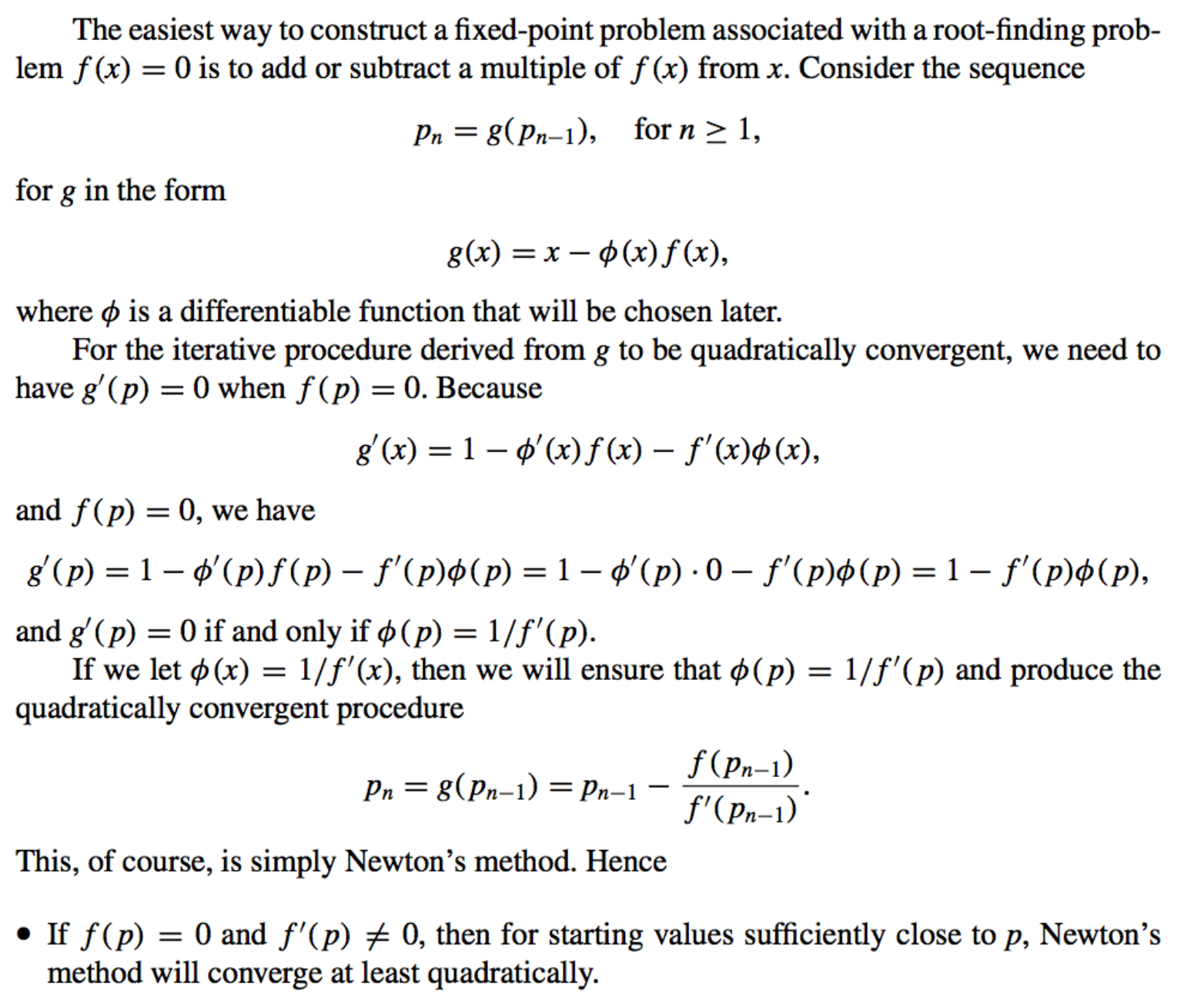

- Newtons’ Method Convergence Rate:

Multiple Roots

-

Problem:

Newton’s method and the Secant method will generally give problems if \(f'( p) = 0\) when \(f ( p) = 0\). -

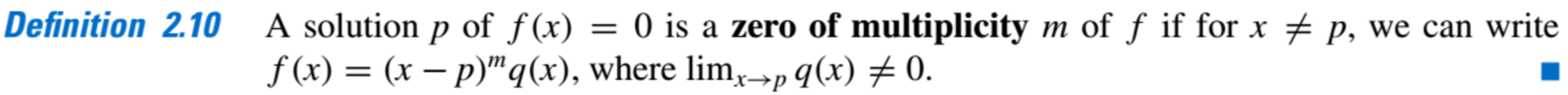

Zeros and their Multiplicity:

- Identifying Simple Zeros:

-

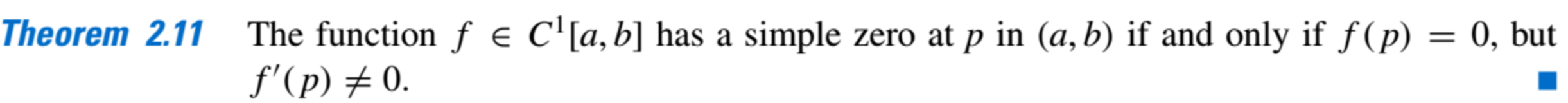

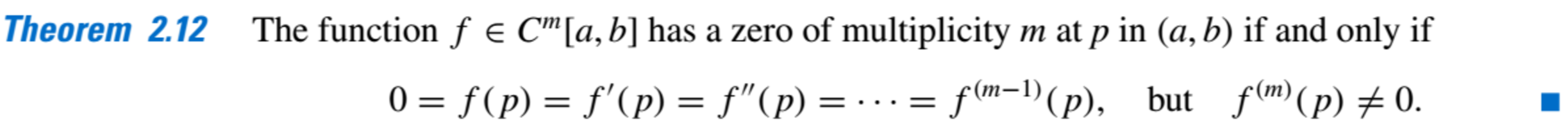

Generalization of Theorem 2.11:

The result in Theorem 2.12 implies that an interval about p exists where Newton’s method converges quadratically to p for any initial approximation \(p_0 = p\), provided that p is a simple zero.

-

- Why Simple Zeros:

Quadratic convergence might not occur if the zero is not simpleExample: Let \(f (x) = e^x − x − 1\) Notice that Newton’s method with \(p_0 = 1\) converges to the zero \(x=0\) but not quadratically

-

Handling the problem of multiple roots:

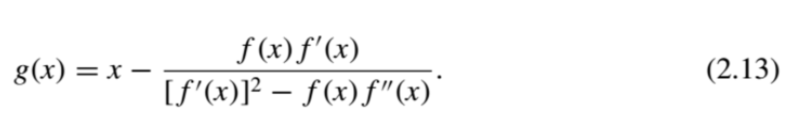

We Modify Newton’s Method

We define \(g(x)\) as:

- Properties:

- If g has the required continuity conditions, functional iteration applied to \(g\) will be quadratically convergent regardless of the multiplicity of the zero of \(f\) .

- Theoretically, the only drawback to this method is the additional calculation of \(f (x)\) and the more laborious procedure of calculating the iterates.

- In practice, multiple roots can cause serious round-off problems because the denominator of (2.13) consists of the difference of two numbers that are both close to 0.

- In the case of a simple zero the original Newton’s method requires substantially less computation.

- Properties: